The Cyber Resilience Act is a new EU regulation intended to improve the cybersecurity posture of products sold in the EU/EEA area. The regulation requires producers to follow good security practices, to document security, and to provide security updates – and also to state compliance with the requiremetns in a Declaration of Conformity, as well as to assign a CE mark to the product. Vulnerability reporting becomes mandatory from 11 September 2026, and full CRA compliance and CE marking for market access becomes mandatory from December 2027.

This applies to all products with digital components that communicate with other systems, typically over a network. It does not apply in general to Open Source Software, but there are important requirements also there, especially for “open source stewards”.

The following overview is meant for software developers, product owners, cybersecurity professionals. It is written from a technical point of view, and not a legal one. For each of the requirements in Annex I, I have suggested in bullet points what “good practice looks like”. This is an opinion, and doing what I suggest here is no guarantee of future compliance. European standardization organizations are developing “harmonised standards” to support compliance. Manufacturers should refer to those when they become available.

What is the CRA and why do we need it?

The CRA is a cybersecurity requlations that applies to anyone “placing a product with digital components on the EU/EEA market”. It requires essential cybersecurity requirements and vulnerability handing requirements ot be met.

The purpose of the regulation is to help build a more cyber resilient Europe, and targets products whereas the perhaps more well-known directive NIS2 for services that are critical to society. Cybersecurity threats, ranging from nation-state intelligence operations to financially motivated organized crime, is costing society a lot and reducing the trust we have in each other, institutions and the technologies we depend on. The CRA is aiming to help reduce vulnerabilty to cyber attacks.

Does it apply to your product?

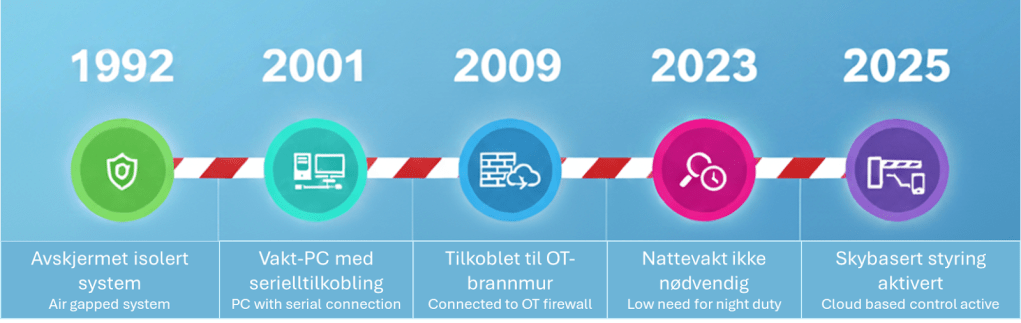

If you are making a product, the first question to determine is if the CRA applies. If the product is a software product that will be installed on a device (personal computer, smartphone, etc), or a physical product that contains software components such as a smart toaster or industrial machinery with built-in control system, it applies.

There are exceptions for products that are already regulated by other EU regulations. In particular, products in vehicles, aeroplanes, and medical devices are not covered, as well as maritime systems falling under the “Maritime Equipment Directive”. Toasters, smartphones and conveyor belts are in, boats and planes are out.

Requirements overview

The requirements of the CRA are in general “follow good security practices”. From the point of view of a manufacturer “placing products on the market”, the key requirements are found in Article 13 of the regulation, and in Annex I.

Before bringing any product with digital elements to market, manufacturers must design, develop, and produce it to meet essential cybersecurity standards. This means conducting a thorough cybersecurity risk assessment that guides every stage—from planning and design to production, delivery, and maintenance. The goal is to minimize risks, prevent incidents, and protect users, especially their health and safety. Manufacturers must also document this assessment, update it regularly during the product’s support period, and include it in the technical documentation. If third-party components (including open-source software) are used, manufacturers must ensure they don’t compromise the product’s security and must report and address any vulnerabilities found in those components. The essential cybersecuriyt requirements are listed in Annex I.

Manufacturers are also required to provide clear support periods—at least five years, or the expected lifetime if shorter, during which vulnerabilities must be actively managed. They must keep users informed about the end of support, maintain security updates for at least a decade, and ensure products can be identified and traced.

Transparency is key: users need accessible instructions, contact details, and information about risks, while authorities must have access to documentation for at least ten years after the product hits the market. If issues arise, manufacturers must act swiftly to correct them, withdraw, or recall products as needed.

Article 13 demands that good security practices are followed, and that a risk assessment is used to guide development and maintenance of the product. The product must be supported with security updates, and those must be available for at least 10 years.

Software security requirements from Annex I

The full requirements of Annex I can be found here: https://eur-lex.europa.eu/legal-content/EN/TXT/HTML/?uri=OJ:L_202402847#anx_I.

Let’s dive into the essential security requirements in Part 1 of Annex I and review what realistically must be in place to meet the requiremetns.

(1) Products with digital elements shall be designed, developed and produced in such a way that they ensure an appropriate level of cybersecurity based on the risks.

This is a broad requirement. In practice it means that you need to have a risk-based process for designing the software architecture and implementation. A good process would include the following aspects:

- Define the user stories or main functional requirements of the component

- Define baseline security requirements

- Perform threat modeling/risk assessment using a structured and documented approach. Often a software-centric threat modeling method will be a good approach during design (such as STRIDE), supported by a product level risk assessment that is threat driven (asset-threat-vulnerability). Define mitigations based on the risk, and design test cases for them, especially if they are logic related and not purely related to bugs/implementation errors.

- Make sure your software design is well-documented and that risk treatments are traceable.

- Regularly update the risk assessment based on changes in the external environment, the software it self, or its use cases.

The second requirement (2) contains all the prescriptive security requirements of Annex I. We will go through these presecriptive requirements one-by-one with a short assessment of what will need to be done to meet it.

(2) On the basis of the cybersecurity risk assessment referred to in Article 13(2) and where applicable, products with digital elements shall:

Click on the arrows to expand each requirement and see the interpretation of the requirement.

(a) be made available on the market without known exploitable vulnerabilities;

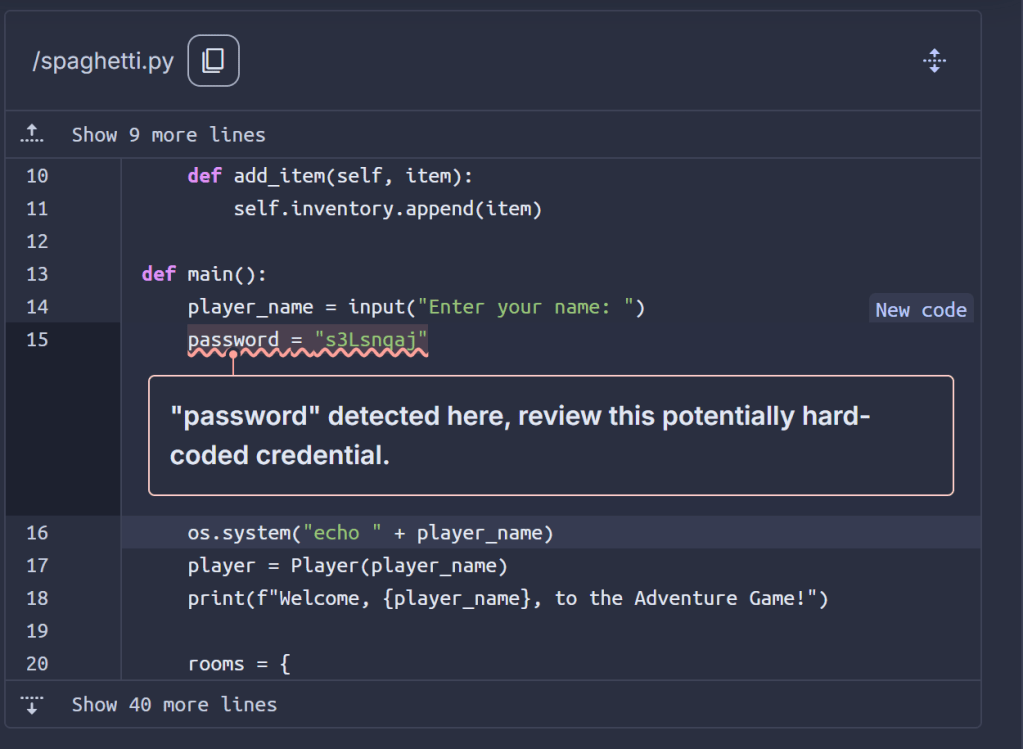

- Keep track of all dependencies and their vulnerabilities – requiring a “software bill of materials”. The build or requirements system will typically take care of dependency tracking – like npm or pip.

- Have a process or system to detect known vulnerabilities, especially “exploitable” ones. Following public catalogs such as the known exploited vulnerabilities from CISA and vendor security bulletins is necessary for this. Patch such vulnerabilities as-soon-as-possible and before releasing new versions.

- Vulnerabilities can also exist in your own code. To satisfy the requirement you must hence also have good security testing practices. This would typically include static analysis (good at catching bugs), and security tests as part of unit and integration testing. You may also use AI coding agents to analyse code for vulnerabilities as part of your build process – this can be very efficient.

- Perform penetration testing and active runtime testing regularly as part of your software assurance and maintenance processes.

(b) be made available on the market with a secure by default configuration, unless otherwise agreed between manufacturer and business user in relation to a tailor-made product with digital elements, including the possibility to reset the product to its original state;

- Secure defaults means that you need to configure data access, user accounts, etc. in a secure way on release, and provide instructions to the user on how to use it in the secure configuration. This may make things more difficult at setup, for example when multi-factor authentication is reuqired.

- The product shoould have a “factory reset” mechanism. This means that if you install the product in a secure configuration, and then as a user change it to unsafe configuration, reverting to the default secure state should be easy.

- Exception: development of software for others, where the buyer (customer) is responsible for security if they want to “place it on the market”.

(c) ensure that vulnerabilities can be addressed through security updates, including, where applicable, through automatic security updates that are installed within an appropriate timeframe enabled as a default setting, with a clear and easy-to-use opt-out mechanism, through the notification of available updates to users, and the option to temporarily postpone them;

- Design the system so that security updates can be provided automatically when possible

- Provide the user with a way to opt-out temporarily

- Notify users of available updates and how long the can postpone. It is best to integrate this as part of the software if it is end user focused, but if it is an IoT application or otherwise not frequently used by people another mechanism should be chosen, for example alert emails.

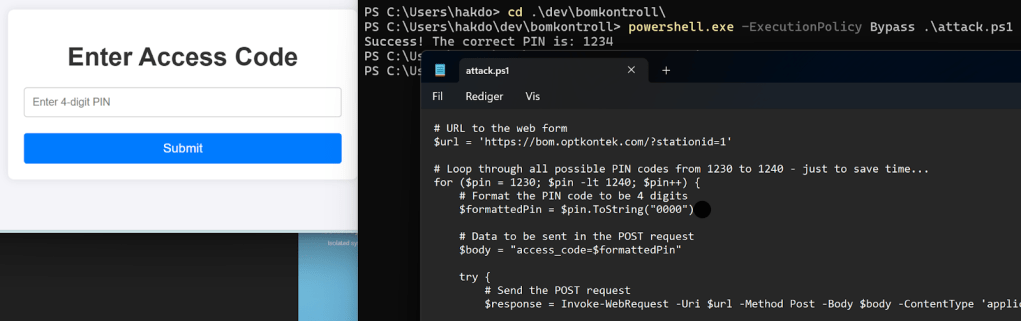

(d) ensure protection from unauthorised access by appropriate control mechanisms, including but not limited to authentication, identity or access management systems, and report on possible unauthorised access;

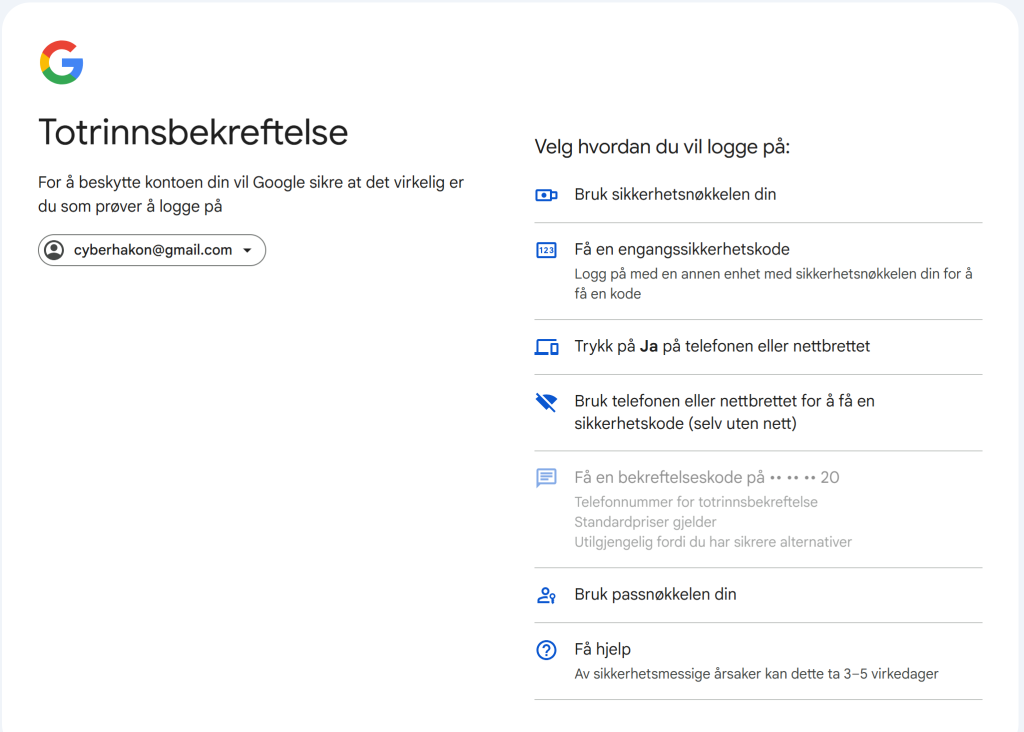

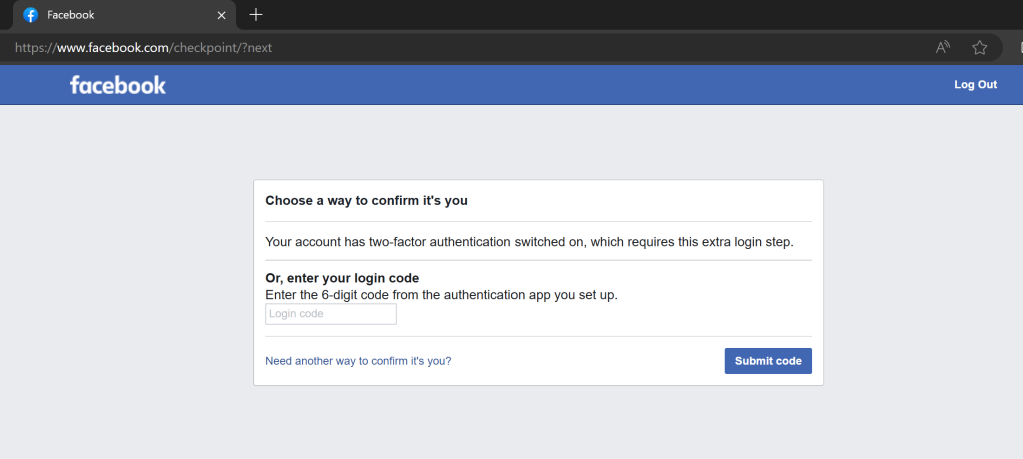

- Provide strong authentication mechanisms – typically by MFA, passkeys, and similar mechanisms

- Build in detection capabilities for identity breaches – either directly in the product or facilitate such detection throu log analytics in an external environment if appropriate (like a SIEM)

- Create an access model for various roles (RBAC) if appropraite and enforce it.

- Provide defensive mechanisms to temporarliy reduce access if there are indicators of account braches

- Provide logs of suspicious authentication and access atttempts

- Log authorization changes made by an administrator

- Map the access control mechanisms to the risk assessment

(e) protect the confidentiality of stored, transmitted or otherwise processed data, personal or other, such as by encrypting relevant data at rest or in transit by state of the art mechanisms, and by using other technical means;

- Use TLS or other encryption methods for network transport. Make sure to only support up-to-date ciphers

- Use disk encryption for data stored on the device. Note that this only protects data when the machine is powered off, if not the read/write access is provided through the operating system.

- Provide file system level or row-level database encryption if so required based on the risk assessment.

- Ensure handling of certificates and encryption keys is done in a secure and robust way

- Monitor cryptographic recommended practices and update accordingly when needed, for example if vulnerabilities are discovered or more powerful crypto analysis attacks become available. Consider the use of quantum safe crypto.

(f) protect the integrity of stored, transmitted or otherwise processed data, personal or other, commands, programs and configuration against any manipulation or modification not authorised by the user, and report on corruptions;

- Enable use of integrity control mechanisms for files and on-disk data through hash controls and similar mechanims. You may be able to use operating system level mechanisms for this.

- TLS provides integrity control for data in transit through message integrity checks (HMAC for example)

- Within the application, changes to data can be logged. To avoid creating too high volume of logs, a threat model should be used to decide the details to include and types of events to log.

- To “report on corruptions” it is good practice to provide this in logs that can be exported ot a sink to avoid a threat actor manipulating important lgos.

(g) process only data, personal or other, that are adequate, relevant and limited to what is necessary in relation to the intended purpose of the product with digital elements (data minimisation);

- Decide which data will need to be process in the application and document this

- Include data handing in risk assessment

- Develop data retention policies with automatic deletion where appropriate

(h) protect the availability of essential and basic functions, also after an incident, including through resilience and mitigation measures against denial-of-service attacks;

- Based on risk assessment, plan and design failover and redundancy mechanisms

- Include denial-of-service cases in risk assessment and build sufficient mitigation against it

- Consider the addition of emergency access mechanisms in case of a serious incident blocking normal access. This can be through a separate interface, or a local “breaking glass account”. In the design of such mechanisms avoid any hardcoding of the access, and make sure use of such mechanisms are logged and detected.

(i) minimise the negative impact by the products themselves or connected devices on the availability of services provided by other devices or networks;

- Include as a case in risk assessmen that a threat actor has taken control of the device and is using it to attack other devices. Build in protections against such abuse cases.

- Limit the allowable egress protocols from the product to those that are necessary for the products functioning. Operating system features and software firewalls may be useful for this.

- Where the products user interface does not intend for the user to have low-level access to the operating system or network stack, include the possibility of vulenrability exploitation causing such access for a threat actor in the risk assessment.

- Design retry mechanisms using network calls to avoid sending too many messages in a short time

(j) be designed, developed and produced to limit attack surfaces, including external interfaces;

- As part of the software design, enumerate and evalauate the attack surface from both external (network access) and internal (low-privileged user) perspectives.

- Minimize access points as required

- Disable debugging interfaces in a secure way when the product is in production mode. Make this part of the secure configuration design.

- Include every entry point in the attack surface in the risk assessment and evaluate against expected abuse cases

(k) be designed, developed and produced to reduce the impact of an incident using appropriate exploitation mitigation mechanisms and techniques;

- Provide detection capabilities to discover cyber incidents and exploitation attempts

- Design the system in a modular way, to allow for automated isolation of likely compromised subcomponents

- Use separation of concerns to ensure complexity does not make partial isolation and containment unrealistic

- Harden the underlying operating system to reduce the impact of incidents, especially to avoid escalation of privileges

- Provide guidance in documentation to incident responders to help with detection, forensics and containment

(l) provide security related information by recording and monitoring relevant internal activity, including the access to or modification of data, services or functions, with an opt-out mechanism for the user;

- Set up robust logging practices in the application

- Use a threat model/risk assessment to determine which events will need to be logged, and if there should be any internal log filtering/rate limiting

- Ideally provide a standardized logging mechanism to integrate with security tooling, such as using operating system logs or making standarized logs available over an API.

- Create clear recommendation for logging and log integration practices

- Allow the user to configure their logging in a transparent way – including turning all logging off, or some logging. The level of user control should be designed to fit the risk and use cases for the product.

(m) provide the possibility for users to securely and easily remove on a permanent basis all data and settings and, where such data can be transferred to other products or systems, ensure that this is done in a secure manner.

- Create a “data export” feature to allow users to configure another product or export the data for use in another system

- Create a way for users to remove/delate all data and settings in the product

This sums up the essential cybersecurity requirements. Tap each requirement to see recommended practices. These are based on my experience with application and device (mostly from OT) security. There will be “harmonised standards” available to prove compliance with CRA requirements – these will provide guidance on how to satisfy the requirements. There is no guarantee that my “quick and dirty empirical suggestions” will align with those (future) standards.

Vulnerability management requirements from Annex I

You will need to shape up your vulnerability handling process if you have not already done so; Manufacturers of products with digital elements shall:

(1) identify and document vulnerabilities and components contained in products with digital elements, including by drawing up a software bill of materials in a commonly used and machine-readable format covering at the very least the top-level dependencies of the products;

- Use tools to create the SBOM – there are both commercial and open source tools available for this. THe SBOM should be in a machine-readable format. CycloneDX and SPDX are common formats that can also be read by editor and IDE plugins.

- Integrate dependency tracking in the CI/CD pipeline of the software, and freeze and SBOM for each release version

- Include top-level dependencies at minimum, but aim to capture transitive dependencies where feasible, and ensure the SBOM is updated with every product release or significant change. Note that deep dependency-trees are generally unsafe and could be considered in breach of the attack surface minimization requirement in Part 1 of Annex I.

(2) in relation to the risks posed to products with digital elements, address and remediate vulnerabilities without delay, including by providing security updates; where technically feasible, new security updates shall be provided separately from functionality updates;

- Create a process to issue security updates fast. The updates should go through the software assurance process to ensure quality, including testing for regressions.

- When updating dependencies, make sure to perform thorough testing if there are breaking API changes in the dependencies.

- Push security updates independently of your feature release cycle – unless you work on a frequent update schedule. If you update the system for features once per year, you cannot use that to push security updates.

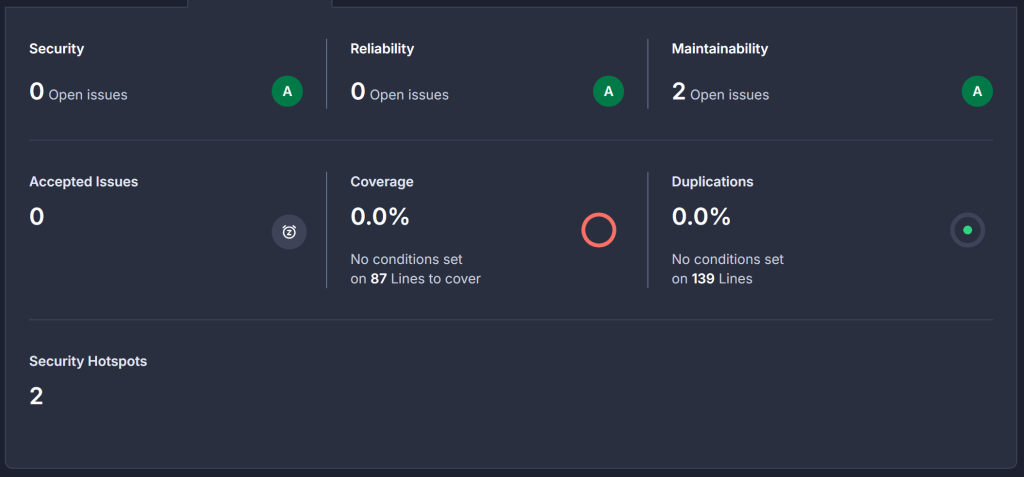

(3) apply effective and regular tests and reviews of the security of the product with digital elements;

- Integrate testing in development and build process, including unit/integration tests, static analysis and SBOM updates

- Perform regular runtime level testing (pentesting)

- Conduct architectural reviews regularly and with every major change – referencing an architectural best practice description. Use this as basis for updating risk assessments/threat models.

(4) once a security update has been made available, share and publicly disclose information about fixed vulnerabilities, including a description of the vulnerabilities, information allowing users to identify the product with digital elements affected, the impacts of the vulnerabilities, their severity and clear and accessible information helping users to remediate the vulnerabilities; in duly justified cases, where manufacturers consider the security risks of publication to outweigh the security benefits, they may delay making public information regarding a fixed vulnerability until after users have been given the possibility to apply the relevant patch;

- In general, provide security bulletins online

- If providing the information to the public is a high risk to product users, you may delay publishing the vulnerability until users have updated. In this case, make sure to document the decision and provide sufficient help for customers to get systems patched.

(4) once a security update has been made available, share and publicly disclose information about fixed vulnerabilities, including a description of the vulnerabilities, information allowing users to identify the product with digital elements affected, the impacts of the vulnerabilities, their severity and clear and accessible information helping users to remediate the vulnerabilities; in duly justified cases, where manufacturers consider the security risks of publication to outweigh the security benefits, they may delay making public information regarding a fixed vulnerability until after users have been given the possibility to apply the relevant patch;

(5) put in place and enforce a policy on coordinated vulnerability disclosure;

- Establish a clear policy to the public on how to report security vulnerabilities. Provide secure channels for doing so, for example by providing a public encryption key

- Define internal workflows for triaging, validating, and addressing reported vulnerabilities, including roles and responsibilities, escalation paths, and timelines for fixes, ensuring alignment with industry standards like ISO/IEC 29147 (coordinated disclosure) or ISO/IEC 30111 (vulnerability handling).

(6) take measures to facilitate the sharing of information about potential vulnerabilities in their product with digital elements as well as in third-party components contained in that product, including by providing a contact address for the reporting of the vulnerabilities discovered in the product with digital elements;

- See also the items under (5)

- Also recommended: consider adding a /.well-known/security.txt file on the web site

- Include information on vulnerability disclosure in the product documentation

(7) provide for mechanisms to securely distribute updates for products with digital elements to ensure that vulnerabilities are fixed or mitigated in a timely manner and, where applicable for security updates, in an automatic manner;

- If possible and safe, plan for “updates-over-the-air” but allow the user to configure and potentially postpone such updates

- Provide updates online if the suer needs to perform a download and update manually

- Make sure update mechanisms are well-protected to avoid supply-chain breaches. Include SHA256 hashes of any updates, and mechanisms to verify patch downloads before installation

(8) ensure that, where security updates are available to address identified security issues, they are disseminated without delay and, unless otherwise agreed between a manufacturer and a business user in relation to a tailor-made product with digital elements, free of charge, accompanied by advisory messages providing users with the relevant information, including on potential action to be taken.

- Provide security updates as soon as they have been developed, tested and released

- Ensure the patch development process is not a bottleneck

- Provide informaiton on updates to registered customers with a push mechanism (emails, etc)

- For “custom software made as a consultant”, make sure the responsibilites for handling distribution of security updates is agreed in the contract.

Documentation requirements

The technical documentation reuqired for CE conformity assessment is found in Annex VII: https://eur-lex.europa.eu/legal-content/EN/TXT/HTML/?uri=OJ:L_202402847#anx_VII.

The Cyber Resilience Act (CRA) mandates that manufacturers of products with digital elements maintain technical documentation to prove compliance with its essential cybersecurity requirements. This documentation, detailed in Annex VII, must be prepared before placing a product on the market and kept updated throughout its support period. It serves as the foundation for conformity assessments and must be made available to market surveillance authorities upon request.

The technical documentation ensures transparency and accountability, enabling authorities and users to verify that products meet the CRA’s security standards. It also supports traceability, vulnerability management, and ongoing compliance.

The documentation is meant to show how the requirements in Article 13 and Annex I are met. It should containt he following:

- General description of the product, its intended purpose, and security properties

- The risk assessment for the product

- Design, manufacturing, and operational details, including cybersecurity risk assessments

- Test reports and evidence of conformity with Annex I requirements

- Information on vulnerability handling processes and software bill of materials (SBOM)

- User instructions and security-related information for safe installation, use, and decommissioning

- The declaration of conformity (CE)

What about open source?

The CRA does not apply to open-source software not made in a commercial context.

If you integrate open source components in your product (and we all do, right?), you need to exercise “due diligence” to make sure the component does not compromise the security of your product, according to Article 13:

(5) For the purpose of complying with paragraph 1, manufacturers shall exercise due diligence when integrating components sourced from third parties so that those components do not compromise the cybersecurity of the product with digital elements, including when integrating components of free and open-source software that have not been made available on the market in the course of a commercial activity.

(6) Manufacturers shall, upon identifying a vulnerability in a component, including in an open source-component, which is integrated in the product with digital elements report the vulnerability to the person or entity manufacturing or maintaining the component, and address and remediate the vulnerability in accordance with the vulnerability handling requirements set out in Part II of Annex I. Where manufacturers have developed a software or hardware modification to address the vulnerability in that component, they shall share the relevant code or documentation with the person or entity manufacturing or maintaining the component, where appropriate in a machine-readable format.

This means that open-source component should be vetted before used.

- Is it an actively maintained project?

- Does it have well-known maintainers and “stewards” supporting development and maintenance?

- Do they have available secuirity information, metrics, etc?

Paragraph (6) under Article 13 also states you should share code or documentation to aid in patching. A good way of doing that is submitting a patch as a pull request in open source projects.

You may also want to consider if the maintainer of the component has good security practices, including account practices. Compromised maintainer accounts on repositories such as PyPi and NPM have resulted in many supply-chain breaches over the last years. This can be hard to know anything about but increasingly stronger security requirements of code sharing platforms are improving the security of these platforms.

If you are one of the key maintainers of an open-source software, you are considered an “open-source steward” under the CRA. The rules require open-source stewards to create secure development policies and to collaborate with market surveillance authorities in order to help build a more secure ecosystem, but they are not subject to penalties for non-compliance. They can’t put you in jail for submitting a bad pull request, or failing to publish a security.md on your Github project.

Activities needed to be able to CE mark your product

The road to CE conformity depends on the classification of your product. Many of the requiements are the same no matter what (those listed above) but the way to “prove” to the market you have done the required things depends on the type of product. There are two key processes that need to be put in place – they should be documented and repeatable to ensure quality:

- A secure development process

- A vulnerability handling process

There are 3 types of products in the CRA: (1)Important products (Class 1 and Class 2) – a list of IT security relevant products defined in Annex III, and (2) Critical products – hardware devices with security boxes, smart meter gateways and high-security cryptographic units, and smartcards and similar products, and finally (3) Products, which is everything else.

If your product is not important or critical, you can generally “self-ceritfy” (Module A). The CRA has 4 modules for conformity assessment – A, B, C and H.

The “modules” refer to standardized conformity assessment procedures defined in the EU’s New Approach Directives and adopted in regulations like the CRA. They outline how manufacturers can demonstrate that their products meet legal requirements. In the CRA, these modules are detailed in Annex VIII and include:

- Module A (Internal production control): The manufacturer performs all assessments internally and issues a self-declaration of conformity.

- Module B (EU-type examination): A notified body examines the product’s technical design and issues an EU-type examination certificate.

- Module C (Conformity to type): The manufacturer ensures their products conform to the approved type (certified under Module B) and issues a declaration of conformity.

- Module H (Full quality assurance): The manufacturer operates a comprehensive quality system covering design, production, and testing, with regular audits by a notified body.

These modules provide flexibility, allowing manufacturers to choose the appropriate level of third-party involvement based on the product’s risk class. For Important products class 1, self-declaration is allowed if harmonised standards are used. If not, they may follow a CE marking route B+C or H.

GAP assessment

Start with a GAP assessment where the requirements of Annex I are measured against:

- Software development practices and competence to perform the work at the required level. OWASP SAMM is a good baseline for this.

- Does the manufacturer have a good product level risk assessment?

- Is there a threat modeling or risk assessment practice in the SDLC to aid in developing security mechanisms and test cases?

- Assess the product design and architecture against the requirements in Annex I

- Assess whether vulnerability handling procedures according to Part 2 of Annex I are defined and in place

It is not expected that the GAP assessment shows zero gaps. One needs to be honest and use the GAP assessment to plan the roadmap towards compliance.

Software development practice implementation

Implement good security practices in software engineering, from developer traininig and competence, quality assurance, test routines, CI/CD, and SBOM generation.

Assign ownership and accountability for security work in design and development.

Risk assessment

Create or update the risk assessment to support product development and vulnerability management. A good security oriented risk assessment at product level should take into account threat actors and their capabilities, vulnerability classes and the types of assets and exposure in the product.

Documentation, DoC and CE marking

Draw up the documentation in accordance with Annex VII. Issue a DoC (Declaration of Conformty) and affix the CE mark to the product. Keep the documentation and DoC in archive for 10 years.

A bit of (free) advertising: Want to discuss the CRA more in detail? Contact TÜV Rheinland (where I work): https://www.tuv.com/world/en/cyber-resilience-act.html, or if you are in Norway, send an email to inquiry@safetec.tuv.com or reach out to me directly on LinkedIn: https://www.linkedin.com/in/hakondo/. This blog post is not sponsored or written on behalf of my employer.

– Håkon.