Modern operating systems have robust built-in systems for creating audit trails. They are very useful for detecting attacks and for understanding what has happened on a machine.

Not all the features that are useful for security monitoring are turned on by default, so you need to plan your auditing. In theory, the more you audit, the more visibility you gain, but there are trade-offs:

- More data also means more noise. Logging more than you need makes it harder to find the events that matter.

- More data can improve visibility, but if you turn on too much logging, the logs can quickly fill up your disk.

- More data gives you more insight into what is going on, but this can be at odds with privacy requirements and expectations.

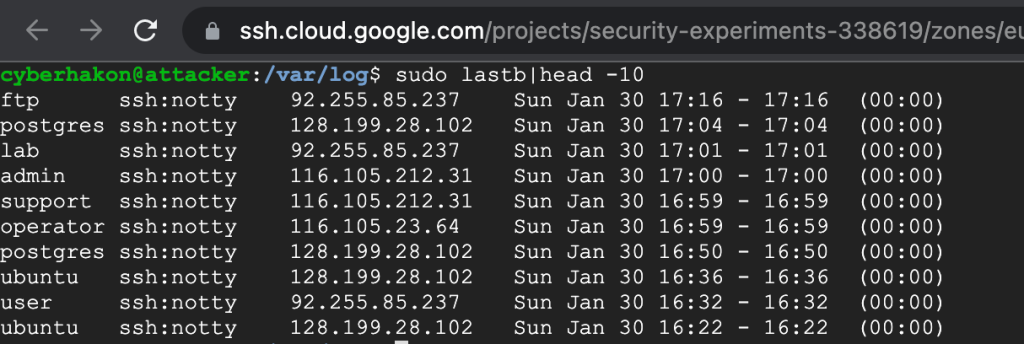

Brute-force attacks: export data and use Excel to make sense of it

Brute-force attacks are popular among attackers because many systems use weak passwords and lack reasonable password policies. You can easily defend against naive brute-force attacks with rate limiting and account lockouts.
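For local accounts, the account lockout policy can be set with the built-in `net accounts` command. The following is a sketch; the numbers are example values chosen for illustration, not a recommendation from this post. On domain-joined machines, lockout for domain accounts is governed by the domain's Group Policy instead.

```powershell
# Run from an elevated shell.
# Show the current password and lockout policy for local accounts
net accounts

# Example values (assumptions, tune for your environment): lock an account for
# 15 minutes after 5 failed logons within a 15-minute observation window
net accounts /lockoutthreshold:5 /lockoutwindow:15 /lockoutduration:15
```

Keep in mind that a lockout threshold lets an attacker lock out the targeted account on purpose, so the values are a balance between slowing down guessing and keeping legitimate users able to log on.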

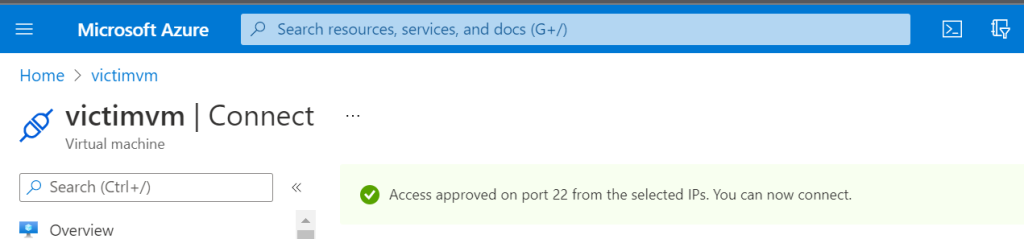

If you expose your computer directly to the Internet on port 3389 (RDP), you will quickly see thousands of logon attempts. The obvious answer to this problem is to avoid exposing RDP directly to the Internet. This also cuts away a lot of the noise, making it easier to detect actual intrusions.
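One way to stop exposing RDP to the whole Internet is to limit the built-in Remote Desktop firewall rules to trusted addresses. A sketch, assuming the English display group name "Remote Desktop" and using 192.168.1.0/24 as a placeholder for your trusted network:

```powershell
# Example only: allow RDP only from one trusted subnet (placeholder value).
# Requires an elevated shell; the display group name is localized on
# non-English Windows.
Set-NetFirewallRule -DisplayGroup "Remote Desktop" -RemoteAddress 192.168.1.0/24

# Check which remote addresses the rules now accept
Get-NetFirewallRule -DisplayGroup "Remote Desktop" | Get-NetFirewallAddressFilter
```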

Logon attempts on Windows generate Event ID 4625 for failed logons and Event ID 4624 for successful logons. An easy way to detect naive attacks is therefore to look for a series of failed attempts for the same username in a short time, followed by a successful logon for that user. A practical way to do this is to use PowerShell to extract the relevant data and export it to a CSV file. You can then import the data into Excel for easy analysis.
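The same rule can also be checked in PowerShell instead of by eye. This is a minimal sketch using a hypothetical function, `Find-BruteForceSuccess` (not a built-in cmdlet). It assumes input objects with `TimeCreated`, `EventID` and `TargetUserName` properties, where `TimeCreated` can be converted with `[datetime]`. It also assumes example defaults of 5 failures within 5 minutes. For each user, it reports a successful logon that was preceded by at least `$Threshold` failures within `$Window`.

```powershell
# Hypothetical helper, not a built-in cmdlet. Flags each successful logon
# (4624) that is preceded by at least $Threshold failed logons (4625) for the
# same user within $Window. Input objects need TimeCreated, EventID and
# TargetUserName properties.
function Find-BruteForceSuccess {
    param(
        [Parameter(Mandatory)] [object[]] $Events,
        [int] $Threshold = 5,
        [TimeSpan] $Window = [TimeSpan]::FromMinutes(5)
    )

    # Handle each target user separately
    $Events | Group-Object -Property TargetUserName | ForEach-Object {
        $failures = [System.Collections.Generic.List[datetime]]::new()

        # Walk through this user's events from oldest to newest
        # (events with identical timestamps may come out in any order)
        foreach ($e in ($_.Group | Sort-Object -Property { [datetime]$_.TimeCreated })) {
            $time = [datetime]$e.TimeCreated
            if ([int]$e.EventID -eq 4625) {
                $failures.Add($time)
            }
            elseif ([int]$e.EventID -eq 4624) {
                # Failures that happened within $Window before this success
                $recent = @($failures | Where-Object { ($time - $_) -le $Window })
                if ($recent.Count -ge $Threshold) {
                    [PSCustomObject]@{
                        TargetUserName = $e.TargetUserName
                        SuccessTime    = $time
                        FailedAttempts = $recent.Count
                    }
                }
                # Start counting afresh after a successful logon
                $failures.Clear()
            }
        }
    }
}
```

With the CSV produced later in this post, a call could look like `Find-BruteForceSuccess -Events (Import-Csv -Path <filepath\myoutput.csv>) -Threshold 10 -Window (New-TimeSpan -Minutes 1)`. Resetting the failure list after each success keeps one burst from being counted twice.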

For a system exposed on the Internet, there will be thousands of logon attempts for various users. Exporting data for non-existent users makes no sense (it is just noise), so it is a good idea to list the valid users first. If we are interested in detecting attacks on local users on a machine, we can use the following cmdlets:

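```powershell
# Names of all enabled local user accounts; @() keeps this an array
# even if there is only one user
$users = @(Get-LocalUser | Where-Object { $_.Enabled } | ForEach-Object { $_.Name })
```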

Next, we fetch the events with IDs 4624 and 4625. You need to do this from an elevated shell to read the events from the Windows security log. For more details on scripting log analysis and making sense of the XML log format that Windows uses, please refer to this excellent blog post: How to track Windows events with Powershell.

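```powershell
# Read logon events (4624 = success, 4625 = failure) from the Security log,
# from midnight yesterday until now. Requires an elevated shell.
$events = Get-WinEvent -FilterHashtable @{
    LogName   = "Security"
    ID        = 4624, 4625
    StartTime = (Get-Date).AddDays(-1).Date
    EndTime   = (Get-Date)
}
```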

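Now we can loop over the $events variable and pull out the relevant data. Note that we check that each logon attempt targets an enabled local user account. The event data fields are read by position, and the positions of fields such as WorkstationName and IpAddress differ between events 4624 and 4625, so each event ID gets its own branch.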

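```powershell
$events | ForEach-Object {
    # Skip attempts against accounts that are not enabled local users
    if ($_.Properties[5].Value -in $users) {
        if ($_.Id -eq 4625) {
            # Failed logon: field positions in the 4625 event data
            [PSCustomObject]@{
                TimeCreated     = $_.TimeCreated
                EventID         = $_.Id
                TargetUserName  = $_.Properties[5].Value
                LogonType       = $_.Properties[10].Value   # numeric, e.g. 3 = Network
                WorkstationName = $_.Properties[13].Value
                IpAddress       = $_.Properties[19].Value
            }
        } else {
            # Successful logon: field positions in the 4624 event data
            [PSCustomObject]@{
                TimeCreated     = $_.TimeCreated
                EventID         = $_.Id
                TargetUserName  = $_.Properties[5].Value
                LogonType       = $_.Properties[8].Value    # numeric, e.g. 3 = Network
                WorkstationName = $_.Properties[11].Value
                IpAddress       = $_.Properties[18].Value
            }
        }
    }
    # Replace <filepath\myoutput.csv> with the path of your output file
} | Export-Csv -Path "<filepath\myoutput.csv>" -NoTypeInformation
```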
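The loop above reads event fields by position (for example index 19 for the IP address of a failed logon), which is easy to get wrong. Windows stores each event as XML with named fields, so an alternative is to read them by name. A minimal sketch with a hypothetical helper, `ConvertFrom-EventDataXml`, that turns the `<Data Name="...">` elements of an event's XML into a hashtable:

```powershell
# Hypothetical helper, not a built-in cmdlet. Converts the <EventData> part of
# an event's XML (for example the string returned by $event.ToXml()) into a
# hashtable keyed by field name, so fields can be read by name.
function ConvertFrom-EventDataXml {
    param([Parameter(Mandatory)] [string] $Xml)

    $doc = [xml]$Xml
    $fields = @{}
    # Every <Data Name="..."> element becomes one entry: Name -> text content
    foreach ($node in $doc.GetElementsByTagName('Data')) {
        $fields[$node.GetAttribute('Name')] = $node.InnerText
    }
    $fields
}
```

Inside the loop you could then write `$fields = ConvertFrom-EventDataXml -Xml $_.ToXml()` and use `$fields['IpAddress']` or `$fields['WorkstationName']` for both event IDs, because the field names are the same in 4624 and 4625 even though their positions differ. Parsing XML for every event is slower than positional access, which matters mostly for very large logs.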

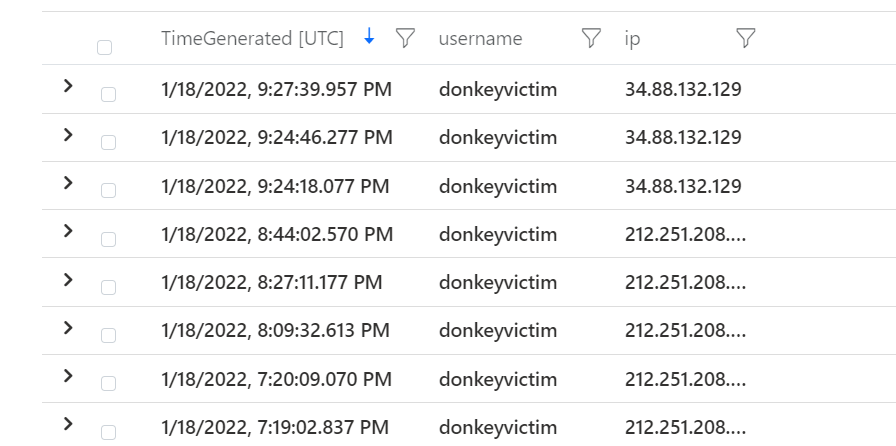

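Now we can import the data into Excel and analyze it. In this example there are many logon attempts for the username “victim”, and filtering on this value clearly shows what has happened (newest events first): two bursts of failed logons (Event ID 4625), at 9:23 PM and 9:26 PM, each of which includes a successful logon (Event ID 4624).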

| TimeCreated | EventID | TargetUserName | LogonType | WorkstationName | IpAddress |
|---|---|---|---|---|---|
| 3/6/2022 9:26:54 PM | 4624 | victim | Network | WinDev2202Eval | REDACTED |
| 3/6/2022 9:26:54 PM | 4625 | victim | Network | WinDev2202Eval | REDACTED |
| 3/6/2022 9:26:54 PM | 4625 | victim | Network | WinDev2202Eval | REDACTED |
| 3/6/2022 9:26:54 PM | 4625 | victim | Network | WinDev2202Eval | REDACTED |
| 3/6/2022 9:26:54 PM | 4625 | victim | Network | WinDev2202Eval | REDACTED |
| 3/6/2022 9:26:54 PM | 4625 | victim | Network | WinDev2202Eval | REDACTED |
| 3/6/2022 9:26:54 PM | 4625 | victim | Network | WinDev2202Eval | REDACTED |
| 3/6/2022 9:26:53 PM | 4625 | victim | Network | WinDev2202Eval | REDACTED |
| 3/6/2022 9:26:53 PM | 4625 | victim | Network | WinDev2202Eval | REDACTED |
| 3/6/2022 9:26:53 PM | 4625 | victim | Network | WinDev2202Eval | REDACTED |
| 3/6/2022 9:26:53 PM | 4625 | victim | Network | WinDev2202Eval | REDACTED |
| 3/6/2022 9:23:38 PM | 4625 | victim | Network | WinDev2202Eval | REDACTED |
| 3/6/2022 9:23:37 PM | 4625 | victim | Network | WinDev2202Eval | REDACTED |
| 3/6/2022 9:23:37 PM | 4624 | victim | Network | WinDev2202Eval | REDACTED |
| 3/6/2022 9:23:37 PM | 4625 | victim | Network | WinDev2202Eval | REDACTED |
| 3/6/2022 9:23:37 PM | 4625 | victim | Network | WinDev2202Eval | REDACTED |
| 3/6/2022 9:23:37 PM | 4625 | victim | Network | WinDev2202Eval | REDACTED |
| 3/6/2022 9:23:37 PM | 4625 | victim | Network | WinDev2202Eval | REDACTED |
| 3/6/2022 9:23:37 PM | 4625 | victim | Network | WinDev2202Eval | REDACTED |
| 3/6/2022 9:23:37 PM | 4625 | victim | Network | WinDev2202Eval | REDACTED |
| 3/6/2022 9:23:37 PM | 4625 | victim | Network | WinDev2202Eval | REDACTED |
| 3/6/2022 9:23:37 PM | 4625 | victim | Network | WinDev2202Eval | REDACTED |
| 3/6/2022 9:23:37 PM | 4625 | victim | Network | WinDev2202Eval | REDACTED |
| 3/6/2022 9:23:37 PM | 4625 | victim | Network | WinDev2202Eval | REDACTED |
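You do not have to scan the spreadsheet by eye to find the most-targeted username. A minimal sketch with a hypothetical function, `Get-LogonSummary`, counts failed and successful logons per user from the exported rows (assumed to have `EventID` and `TargetUserName` columns) and lists the users with the most failures first:

```powershell
# Hypothetical helper, not a built-in cmdlet. Counts failed (4625) and
# successful (4624) logons per target user, most failures first.
function Get-LogonSummary {
    param([Parameter(Mandatory)] [object[]] $Events)

    $Events | Group-Object -Property TargetUserName | ForEach-Object {
        # EventID may be a string when the rows come from Import-Csv
        $ids = @($_.Group | ForEach-Object { [int]$_.EventID })
        [PSCustomObject]@{
            TargetUserName = $_.Name
            Failed         = @($ids | Where-Object { $_ -eq 4625 }).Count
            Succeeded      = @($ids | Where-Object { $_ -eq 4624 }).Count
        }
    } | Sort-Object -Property Failed -Descending
}
```

For example, `Get-LogonSummary -Events (Import-Csv -Path <filepath\myoutput.csv>) | Select-Object -First 10` shows the ten most-attacked accounts, which is a quick way to decide which username to filter on.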

Engineering visibility

The above is a simple example of how scripting can help you pull data out of local logs. This works well for an investigation after an intrusion, but ideally you want to detect attack patterns before an attacker gets that far. That is why you want to forward logs to a SIEM and create alerts based on attack patterns.

For logon events, Windows creates the relevant logs without any changes to the audit policy. Many other very useful security events can be logged on Windows, but you have to enable them first. Two very useful events are the following:

- Event ID 4688: A process was created. This can be used to capture what an attacker does after gaining access (post-exploitation).

- Event ID 4698: A scheduled task was created. This is useful for detecting a common persistence technique.
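On a single machine, both events can be switched on with the built-in `auditpol` tool; in a domain you would typically configure the same settings through Group Policy. A sketch, assuming English subcategory names: "Process Creation" (Detailed Tracking) for 4688 and "Other Object Access Events" (Object Access) for 4698. The command-line option for 4688 is optional, and since command lines can contain sensitive data it touches the privacy trade-off mentioned at the start of this post.

```powershell
# Run from an elevated shell. Subcategory names are the English ones (they are
# localized on other Windows languages), and audit settings pushed by Group
# Policy can override what you set locally.

# Event ID 4688 is logged by the "Process Creation" subcategory
auditpol /set /subcategory:"Process Creation" /success:enable

# Event ID 4698 is logged by the "Other Object Access Events" subcategory
auditpol /set /subcategory:"Other Object Access Events" /success:enable

# Optional: include the full command line in 4688 events
reg add "HKLM\Software\Microsoft\Windows\CurrentVersion\Policies\System\Audit" /v ProcessCreationIncludeCmdLine_Enabled /t REG_DWORD /d 1 /f

# Check the resulting settings
auditpol /get /subcategory:"Process Creation"
auditpol /get /subcategory:"Other Object Access Events"
```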

Weighing the trade-offs mentioned at the beginning of this post, you should make turning on the necessary auditing part of your security strategy.