Cybersecurity awareness training has become a central activity in many firms. It takes time, requires planning and management follow-up, and is very often mandatory for all employees. But does it work? That depends – first and foremost on people’s feelings towards cybersecurity.

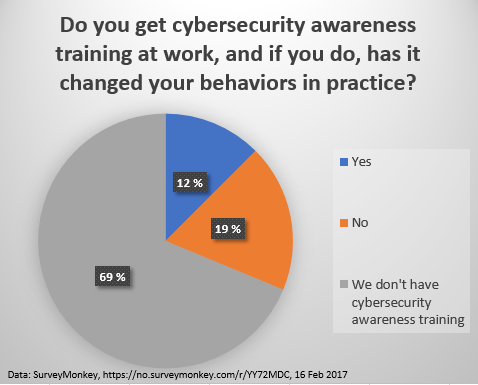

A very informal survey in my network suggests that most people don’t receive any awareness training at all at work, and among those who do, more say it has not changed their behavior than say it has had a positive impact.

At the end of last year I participated in a local meeting of the Norwegian Association for Quality and Risk Management, where I heard a very interesting talk by Maria Bartnes (Twitter: @mariabartnes) from SINTEF on user behaviors and cybersecurity training. She argued that training is only effective if people are motivated to take it – and for that, their beliefs and goals need to be well aligned with those of the organization they are part of. She portrayed this in a matrix of employee stereotypes, with “feelings towards policies and company goals” on one axis and “risk understanding” on the other – which I found a very effective way of communicating that not all employees are created equal 🙂. At one end you have technical risk experts who love the company they work for and its policies; at the other you have people who don’t understand risk at all and who also feel angry or resentful towards both their company and its policies – and you have everything in between.

Another issue is that many organizations tend to make training mandatory and the same for all. It makes little sense to force your experts to sit through basic introductions that are second nature to them anyway – a lot of knowledge workers experience this when HR departments push e-learning modules to all employees.

What does it all mean?

Some people have argued that security awareness training is completely useless. This is probably going a bit too far, but there are clear limits to what “training” of any kind can achieve when it comes to changing people’s behaviors. We use computers by habit: the way we act when we read e-mails, search the internet, write Word documents or compile code is all “second nature” once we are experienced at it. Changing those habits is hard, and it does not happen automagically through training.

Focusing on motivation and feelings is a good start – without motivation, it is very unlikely that users who exhibit risky behaviors will make any effort to change them.

Continuous effort is needed to change behaviors and create new habits. This means that employees must not only receive knowledge about the “why” and the “how”, but must also gain practical knowledge by doing. When we realize that, we see that it becomes very important not to demotivate employees who already have positive feelings about cybersecurity. Forcing the highly motivated and technically competent to take very basic e-learning lessons may kill that motivation – and thus increase your organization’s risk exposure.

It also becomes very important to motivate those that are feeling resentful, both the technically competent ones, and those in the “worst-case corner” of resentful and low technical competency. Motivation comes before technical know-how.

For cybersecurity awareness training to have a positive effect it is thus necessary to tailor the contents to each employee based on skills and motivation. Further, the real work starts after the training – it is the action of “doing” that changes habits, not the mere presentation of information about phishing e-mails and strong passwords. This means you need leadership, and you need change agents.
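To make the idea of tailoring by skills and motivation a bit more concrete, here is a minimal Python sketch. The two-by-two split, the `Employee` fields, the `training_track` function and the track descriptions are all assumptions made for illustration; they are not taken from the talk, and a real program would need far richer input than two yes/no flags.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Employee:
    """Hypothetical, deliberately simplified view of one employee."""
    name: str
    motivated: bool      # positive feelings towards policies and company goals
    risk_skilled: bool   # good understanding of risk


def training_track(employee: Employee) -> str:
    """Pick a training approach from the motivation/skill quadrant.

    The track names are illustrative assumptions, not an established scheme.
    """
    if employee.motivated and employee.risk_skilled:
        # Do not bore the experts with basics; use them as change agents.
        return "change agent: skip the basics, help motivate others"
    if employee.motivated:
        # Motivation is there, so focus on practical learning by doing.
        return "hands-on skills training"
    if employee.risk_skilled:
        # Competent but resentful: motivation comes before more know-how.
        return "motivation first: involve in policy discussions"
    # The "worst-case corner": resentful and low competency.
    return "motivation first, then basic skills"


if __name__ == "__main__":
    staff = [
        Employee("expert who likes the company", True, True),
        Employee("resentful expert", False, True),
    ]
    for person in staff:
        print(f"{person.name}: {training_track(person)}")
```

The point of the sketch is only that the decision branches on motivation before it branches on skill, which mirrors the argument that motivation comes before technical know-how.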

Use your technically skilled and highly motivated people as change agents. They can help motivate others, and they can exemplify good behaviors. Let these supercyberusers support and educate management. And bring the managers on board to follow up on security regularly rather than outsourcing it to the IT department. Bringing entertaining abuse cases up for discussion in meetings can help, as can publicly praising employees who make an effort to raise both their own security practices and the security maturity of the company as a whole to a new level.

Summary

Make sure you adapt your training to both the motivation and the technical skills of those who receive it. See maturity work in cybersecurity as part of your organization’s continuous improvement program – embed it in the way your organization works instead of relying solely on information campaigns. Use change agents and inspiring leaders in your organization to change behavior, from the individual to the firm as a whole. That is the only way to succeed in building security awareness that actually changes behaviors.