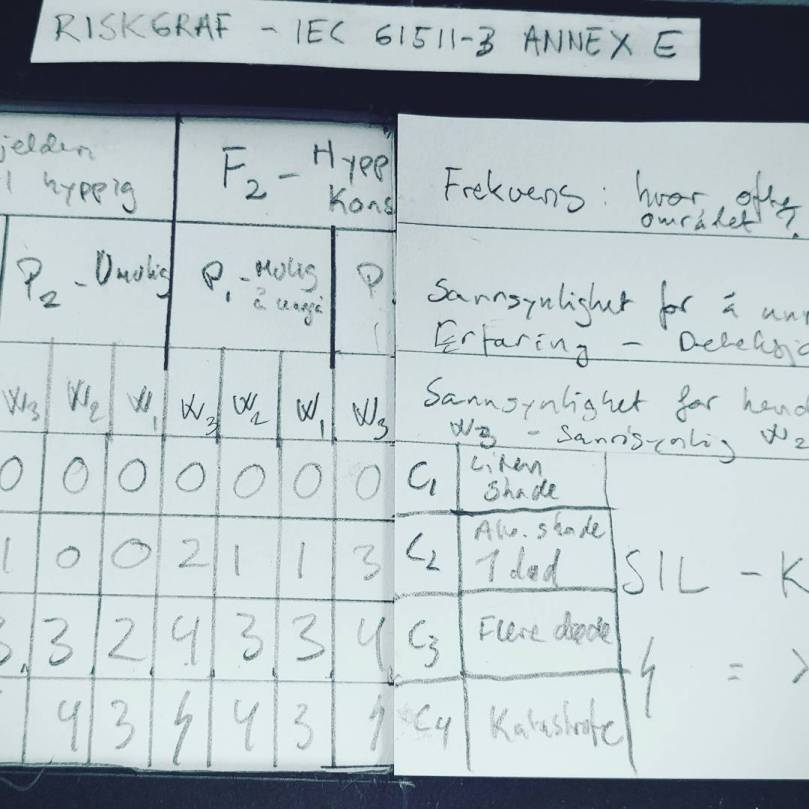

Risk management comes with a large number of methods. Within the process industries, semi-quantitative methods are popular, in particular for determining the required safety integrity level (SIL) for safety instrumented functions (automatic shutdowns, etc.). Two common approaches are LOPA, short for “layer of protection analysis”, and Riskgraph. These methods are sometimes treated as “holy” by practitioners, but the truth is that they are merely cognitive aids for sorting through our thinking about risks.

In short, the risk assessment process consists of the following steps:

- Identify risk scenarios

- Find out what you already have in place that reduces the risk, such as design features and procedures

- Determine what the potential consequences of the scenario at hand are, e.g. worker fatalities or a major environmental disaster

- Estimate how likely or credible it is that the risk scenario will occur

- Consider how much you trust the existing barriers to do the job

- Determine how trustworthy your new barrier must be for the situation to be acceptable

Several of these steps are difficult tasks in their own right, and putting together a risk picture that allows you to make sane decisions is hard work. That’s why we lean on methods, to help us make sense of the mess that discussions about risk typically lead to.
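To make the steps above concrete, here is a minimal LOPA-style sketch. It assumes the existing barriers are fully independent and uses the low-demand SIL bands commonly quoted from IEC 61508/61511. The function name and all numbers are made up for illustration; your own method description decides the real inputs.

```python
def required_sil(initiating_freq, existing_layer_pfds, tolerable_freq):
    """Return (risk reduction factor, SIL label) needed from a new barrier.

    Frequencies are per year; each PFD is a probability of failure on demand.
    """
    freq_with_existing_layers = initiating_freq
    for pfd in existing_layer_pfds:
        # Multiplying PFDs assumes the layers fail independently of each other.
        freq_with_existing_layers *= pfd

    rrf = freq_with_existing_layers / tolerable_freq

    # Low-demand SIL bands, written as upper bounds on the risk reduction factor.
    bands = [(10, "below SIL 1"), (100, "SIL 1"), (1_000, "SIL 2"),
             (10_000, "SIL 3"), (100_000, "SIL 4")]
    for upper, label in bands:
        if rrf <= upper:
            return rrf, label
    return rrf, "beyond SIL 4, reconsider the design"


# Hypothetical scenario: 0.5 events per year, two existing layers with
# PFD 0.1 each, and a tolerable frequency of 1e-5 per year.
rrf, label = required_sil(0.5, [0.1, 0.1], 1e-5)
print(f"Required risk reduction: {rrf:.0f} -> {label}")
```

With these made-up inputs the new barrier needs a risk reduction factor of about 500, which lands in the SIL 2 band. Every input is a judgment call, so the result is only as good as those judgments.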

Consequences can be hard to gauge, and one bad situation may lead to a set of different outcomes. Think about the risk of “falling asleep while driving a car”. Both of these are valid consequences that may occur:

- You drive off the road and crash in the ditch – moderate to serious injuries

- You steer the car into the wrong lane and crash head-on with a truck – instant death

Should you think about both, pick one of them, or consider another consequence not on this list? In many “barrier design” cases the designer chooses to design for the worst-case credible consequence. It may be difficult to judge what is really credible, and what is truly the worst case. And is this approach sound if the worst case is credible but still quite unlikely, while at the same time you have relatively likely scenarios with less serious outcomes? If you use a method like LOPA or Riskgraph, your method description may very well tell you to always use the worst-case consequence. A bit of judgment and common sense is still a good idea.
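A small sketch can show why this choice matters. It uses the sleepy-driver example with invented numbers: the event frequency, the conditional probabilities and the tolerable frequencies are all assumptions made for illustration, not data.

```python
# All numbers are hypothetical and only illustrate the reasoning.
event_freq_per_year = 0.1  # assumed frequency of falling asleep at the wheel

outcomes = [
    # (description, probability given the event, tolerable frequency per year)
    ("ditch, moderate to serious injuries", 0.9, 1e-3),
    ("head-on collision, fatality", 0.005, 1e-5),
]


def required_rrf(event_freq, cond_prob, tolerable_freq):
    """Risk reduction factor needed to bring one outcome down to its tolerable frequency."""
    return event_freq * cond_prob / tolerable_freq


# Treat each outcome with its own likelihood and its own acceptance criterion.
results = {desc: required_rrf(event_freq_per_year, p, tol) for desc, p, tol in outcomes}
governing = max(results, key=results.get)

# The "always use the worst case" rule: the whole event frequency is assigned
# to the most severe outcome and its strictest acceptance criterion.
strictest_tolerable = min(tol for _, _, tol in outcomes)
worst_case_rrf = required_rrf(event_freq_per_year, 1.0, strictest_tolerable)

for desc, rrf in results.items():
    print(f"{desc}: required risk reduction about {rrf:.0f}")
print(f"Governing outcome: {governing}")
print(f"Worst-case rule: required risk reduction about {worst_case_rrf:.0f}")
```

With these assumed inputs the less severe but more likely outcome governs when each outcome is assessed separately, while the worst-case rule asks for a far larger risk reduction. Neither answer is automatically right; the point is that the choice of consequence drives the requirement.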

Another difficult topic is probability, or credibility. How likely is it that an initiating event will occur, and what is the initiating event in the first place? If you are the driver of the car, is “falling asleep behind the wheel” the initiating event? Let’s say it is. You can definitely find statistics on how often people fall asleep behind the wheel. The key question is whether they apply to the situation at hand. Are data from other countries applicable? Maybe not, if they have different road standards, different requirements for getting a driver’s license, etc. Personal or local factors can also influence the probability. In the case of the driver falling asleep, the probabilities would be influenced by his or her health, stress levels, maintenance of the car, etc. The bottom line is that the probability estimate will also be a judgment call in most cases. If you are lucky enough to have statistical data to lean on, make sure you validate that the data are representative of your situation. Good method descriptions should also give guidance on how to make these judgment calls.
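To see how much this judgment call can matter, the sketch below varies the assumed initiating event frequency and computes how reliable a new barrier would need to be. The tolerable frequency, the credit for the existing barrier and the three candidate frequencies are hypothetical values chosen for illustration.

```python
# Hypothetical inputs, for illustration only.
tolerable_freq = 1e-5        # acceptable frequency of the consequence, per year
existing_layer_pfd = 0.1     # assumed credit for one existing barrier

# Three defensible-looking estimates of the same initiating event frequency.
candidate_freqs = {"optimistic": 0.02, "best guess": 0.2, "pessimistic": 2.0}


def required_pfd(initiating_freq):
    """Largest probability of failure on demand the new barrier may have."""
    return min(1.0, tolerable_freq / (initiating_freq * existing_layer_pfd))


required = {name: required_pfd(freq) for name, freq in candidate_freqs.items()}

for name, pfd in required.items():
    print(f"{name}: new barrier needs PFD <= {pfd:.1e}")
```

Each tenfold change in the estimated frequency tightens or relaxes the target by the same factor, which in SIL terms is roughly one level per order of magnitude. An estimate that is "only" off by a factor of ten is therefore not a small error.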

Most risks you identify already have some risk-reducing barrier elements. These can be things like alarms and operating procedures, and other means to reduce the likelihood or consequence of escalation of the scenario. Determining how much you are willing to rely on these other barriers is key to setting a requirement on your safety function of interest – typically a SIL rating. Standards limit how much you can trust certain types of safeguards, but here too some judgment is involved. Key questions are:

- Are multiple safeguards really independent, such that the same type of failure cannot knock out multiple defenses at once?

- How much trust can you put in each safeguard?

- Are there situations where the safeguards are less trustworthy, e.g. if there are only summer interns available to handle a serious situation that requires experience and leadership?
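The independence question can be illustrated with a simplified beta-factor model, a common approximation for two identical safeguards. Here `beta` is the assumed fraction of failures that takes out both safeguards at once; the model choice and the numbers are assumptions for illustration, not a prescribed method.

```python
def combined_pfd(pfd, beta):
    """PFD of two identical safeguards in a simplified beta-factor model.

    beta is the fraction of each safeguard's failures that are common cause.
    """
    independent_part = ((1 - beta) * pfd) ** 2  # both fail for unrelated reasons
    common_cause_part = beta * pfd              # one shared cause defeats both
    return independent_part + common_cause_part


# Two safeguards, each assumed to fail on demand 1 time in 10.
fully_independent = combined_pfd(0.1, beta=0.0)
with_common_cause = combined_pfd(0.1, beta=0.1)

print(f"Independent:    PFD {fully_independent:.4f}, credit about {1 / fully_independent:.0f}")
print(f"10% common cause: PFD {with_common_cause:.4f}, credit about {1 / with_common_cause:.0f}")
```

Under these assumptions, a 10% common-cause share cuts the credit for the pair from about 100 to about 55. Simply multiplying the two probabilities overstates the protection whenever the safeguards share sensors, power, people or procedures.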

Risk assessment methods are helpful, but don’t forget that you make a lot of assumptions when you use them. Question your assumptions even if you use a recognized method, especially if somebody’s life will depend on your decision.