If you want to create an AI agent or another AI-based system, what does it actually take to comply with European regulations? I decided to do a small experiment in three parts:

- Build a small AI-based utility using Google AI Studio and Gemini

- Identify relevant regulatory requirements

- Assess the gaps between the current setup of the app and what would need to be in place to use it legally in a commercial setting, and implement the necessary changes

My conclusion is that for a “normal AI app” that doesn’t try to manipulate voters, introduce a social credit system or run a nuclear power plant, this is not so difficult.

The app: Sektorbyttet

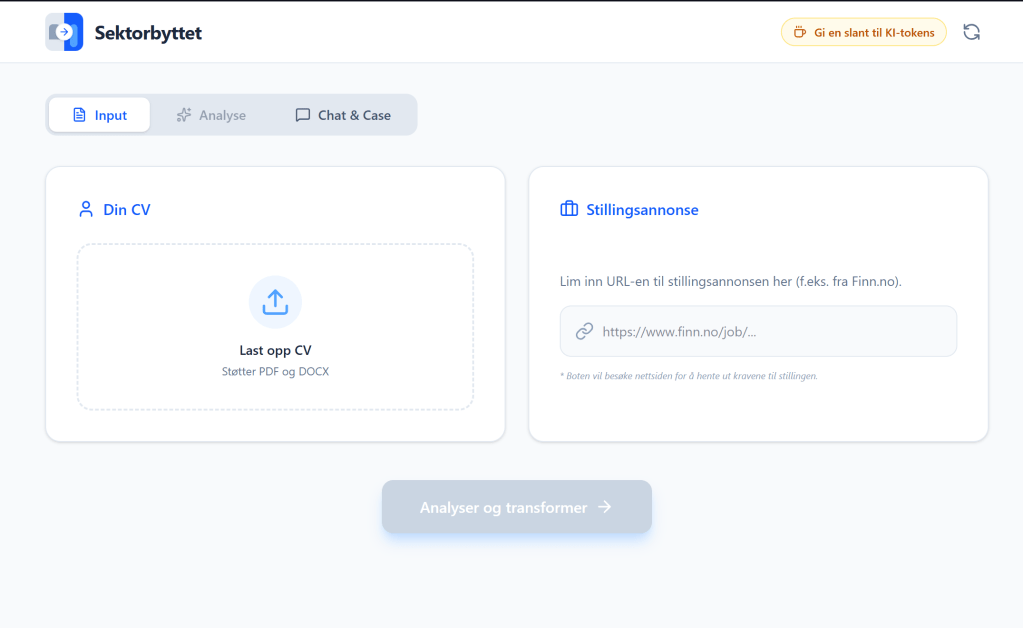

I used Google’s AI Studio to create an app that reviews CVs from people coming from the public sector and helps them rewrite them in a way that is attractive to private consulting companies. The reason for this specific use case is that I often review CVs from job applicants at work, and I see that people coming from the government side often struggle to show how their experience is relevant to the requirements of a consulting job, even when they have lots of relevant experience!

The app is (temporarily) published here: https://sektorbyttet-328713310197.us-west1.run.app. The reason it is published to a U.S. endpoint in Google Cloud is that I simply clicked the “Publish” button in AI Studio. More about this later.

The app allows you to upload your CV (it is only processed in RAM, not stored anywhere as a file) and provide a link to an online job posting, and it helps you optimize the descriptions in the CV to better match the job. You can then talk to a chatbot that is very job focused. It helps you understand the move from the (Norwegian) public sector to the private sector, and helps you practice some case interviews. The AI features are provided by the Gemini API, running Gemini-Flash-3.1-Preview.

The requirements

This is seen from a Norwegian perspective, but the regulations are generally equivalent to those in EU countries. The following are the key regulations a (commercial) app would have to satisfy. Note that hobby projects and research projects are not regulated the same way, so this would only actually apply if the app were offered as a commercial service by a company.

- GDPR: privacy regulations

- AI Act: classification of AI systems, with different requirements depending on risk level

- Online trade regulations (Norwegian: Ehandelsloven) and the Digital Services Act

We start with the AI Act, where systems have to be classified according to their risk level. There are four levels:

- Prohibited AI systems: generally use cases that are clearly immoral or evil.

- High-risk AI systems: systems that can cause serious harm if vulnerabilities are exploited or things otherwise go wrong

- Limited risk systems: systems that can pose a risk of misunderstandings, etc., but are not directly controlling space ships or nuclear power plants, or making decisions about your loan application. Chatbots are a typical example.

- Minimal risk systems: spam filters and video game animations.

Most systems will end up as “limited risk” if you follow the criteria of the AI Act. The TÜV Risk Navigator is a handy tool to help with the classification: https://www.tuev-risk-navigator.ai/?lang=de.
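
The four levels can be read as an ordered series of questions, where the first "yes" decides the level. The snippet below is a minimal sketch of that reading, not a legal classification. The `UseCase` fields and the `triage` function are hypothetical names invented for illustration, and the three yes/no questions are a strong simplification of the criteria in the AI Act.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    PROHIBITED = "prohibited"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


@dataclass
class UseCase:
    # Hypothetical, simplified questions based on the list above.
    prohibited_practice: bool = False      # e.g. social credit system, voter manipulation
    serious_harm_if_it_fails: bool = False # e.g. loan decisions, nuclear power plant
    interacts_with_people: bool = False    # e.g. chatbots, generated text shown to users


def triage(use_case: UseCase) -> RiskTier:
    """Return the first (strictest) level whose question is answered with yes."""
    if use_case.prohibited_practice:
        return RiskTier.PROHIBITED
    if use_case.serious_harm_if_it_fails:
        return RiskTier.HIGH
    if use_case.interacts_with_people:
        return RiskTier.LIMITED
    return RiskTier.MINIMAL


# A chatbot-style app with none of the stricter flags set.
cv_app = UseCase(interacts_with_people=True)
print(triage(cv_app).value)
```

A first pass like this is only useful for deciding where to look more closely. The actual classification should be done against the text of the regulation or with a tool such as the one linked above.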

The regulation providing the most requirements is GDPR. You have to:

- Have a clear privacy notice describing how data is processed and how users can exercise their privacy rights (right of access, erasure, etc.)

- Make sure data processing only occurs in countries with approved privacy protections

- Make sure you have a legal basis for processing the data
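
The requirement about where processing takes place can be checked mechanically for the hosting part. The sketch below assumes the service URL follows the same `<service>.<region>.run.app` pattern as the address published earlier in this post, and it assumes that only Google Cloud regions whose names start with `europe-` are acceptable. Both the allow-list and the function names are illustrative choices, not something the regulation prescribes.

```python
from urllib.parse import urlparse

# Assumption: only Google Cloud regions named "europe-..." are acceptable.
ALLOWED_REGION_PREFIXES = ("europe-",)


def cloud_run_region(url: str) -> str:
    """Extract the region label from https://<service>.<region>.run.app."""
    host = urlparse(url).hostname or ""
    labels = host.split(".")
    if len(labels) != 4 or labels[-2:] != ["run", "app"]:
        raise ValueError(f"not a regional run.app URL: {url!r}")
    return labels[1]


def region_is_allowed(url: str) -> bool:
    """True if the region of the URL matches one of the allowed prefixes."""
    return cloud_run_region(url).startswith(ALLOWED_REGION_PREFIXES)


published = "https://sektorbyttet-328713310197.us-west1.run.app"
print(cloud_run_region(published), region_is_allowed(published))
```

This only covers where the web app itself is hosted. Where the model provider processes the prompts is a separate question that has to be answered from the provider's terms and configuration.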

In addition to this, GDPR requires the service provider to have “adequate security practices” covering both organizational and technical aspects of cybersecurity. As a minimum this would mean having a security policy, clear ownership and an incident response plan, as well as secure coding practices.

The commercial regulations generally require you to be transparent about who is offering the service, and provide contact details, as well as terms and conditions prior to the purchase decision.

The gap assessment

- AI Act: as a limited risk application, the requirement is generally to be transparent about the use of AI and about what content in the app is generated by AI.

- GDPR: we needed to add a privacy notice. The processing is quite limited, and the data is not stored for later use. From a security point of view, we have completed a review against the OWASP Top 10, verifying that all key practices are in place.
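
The transparency requirement from the AI Act can be handled at the point where text is shown to the user. The following is a minimal sketch of one way to do that. The `AppMessage` type, the `render` function and the label wording are hypothetical and are not taken from the actual app.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AppMessage:
    text: str
    ai_generated: bool
    model: str = ""  # optional display name of the model that produced the text


def render(message: AppMessage) -> str:
    """Prefix AI-generated text with a visible label; leave other text untouched."""
    if not message.ai_generated:
        return message.text
    label = "AI-generated"
    if message.model:
        label += f" by {message.model}"
    return f"[{label}] {message.text}"


print(render(AppMessage("Led a team of 12 analysts.", ai_generated=True, model="Gemini")))
print(render(AppMessage("Terms and conditions apply.", ai_generated=False)))
```

Keeping the flag on the message itself, rather than deciding in the user interface, makes it harder to show generated text without the label by accident.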

Conclusion

The conclusion is that developing AI-based systems is perfectly doable under European regulations, and that for most applications the governance burden is not excessive. So we have no reason not to build, whether we use local or cloud-based services.